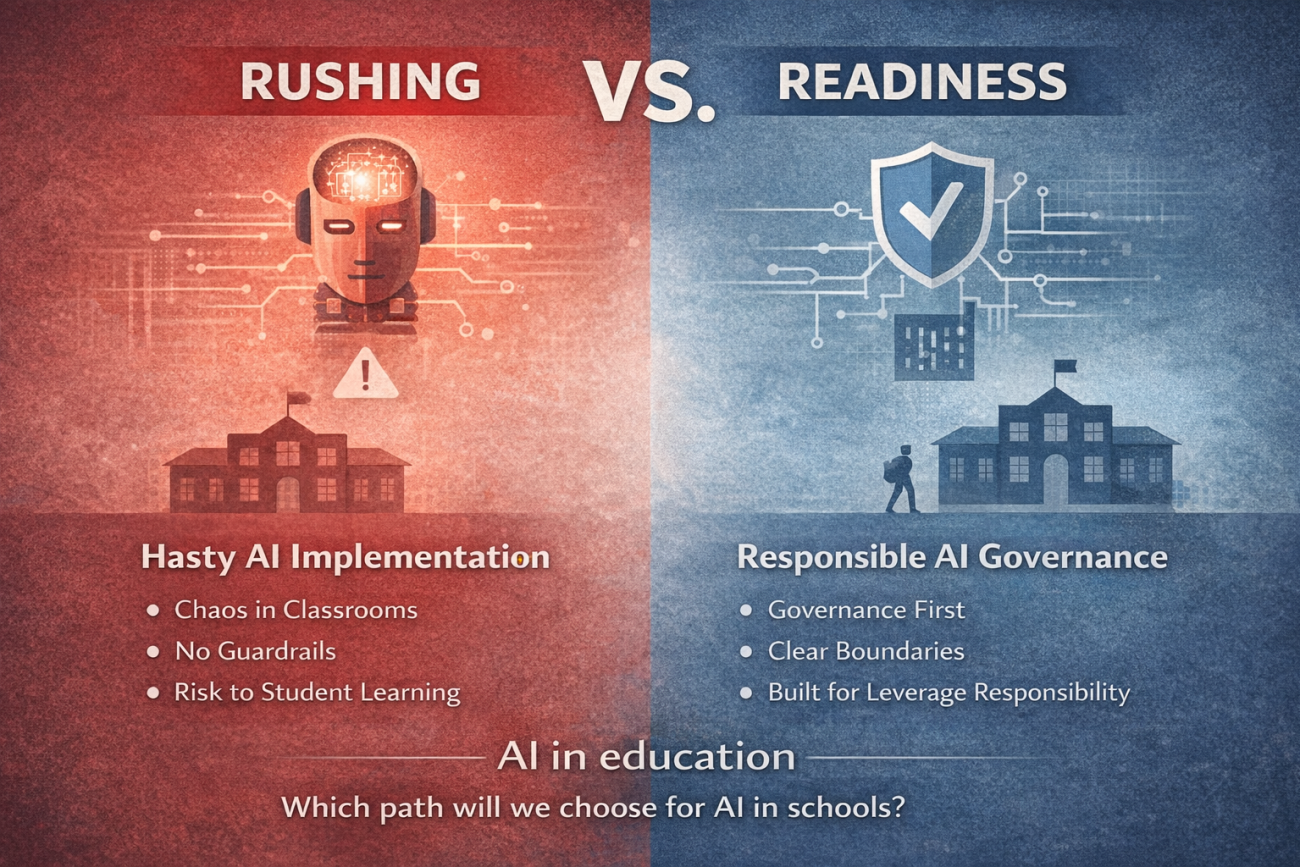

AI is already entering our classrooms, whether we are ready or not. The real risk is not adopting too late; it is scaling too fast without governance, clear boundaries, and safeguards for how students actually learn. By the time the consequences show up, it may be too late to undo them.

Mauritius has taken an important step by piloting MyGPT in a small number of schools. As discussions around artificial intelligence in education gain momentum, much of the attention has focused on what the technology can do. Far less attention has been paid to how such systems should be integrated into the education system in a way that protects learning, fairness, and long-term outcomes for students.

This distinction matters. Education systems do not fail because technology is weak. They fail when powerful tools are introduced without the institutional foundations required to support them.

Artificial intelligence is not simply another digital resource like a tablet or an online platform. Once embedded in classrooms, it begins to influence how students think, how teachers teach, and how achievement is measured. If these shifts are not carefully managed, the consequences may only become visible years later.

At the heart of responsible integration lies governance. An AI system used in schools must operate within clear boundaries: who controls it, how student data is handled, what the system is permitted to do, and how misuse or harm is addressed. Without such clarity, responsibility becomes blurred. When an AI system provides misleading information or is used inappropriately, it is often unclear who is accountable or how correction should occur.

Governance is not about slowing innovation. It is about ensuring that innovation serves educational goals rather than quietly redefining them. When rules are absent or vague, convenience tends to guide usage, not pedagogy.

Equally critical is the role of teachers. No AI initiative can succeed in education if teachers do not clearly understand how the technology fits within their professional role. When educators are unsure whether AI is meant to support them or replace parts of their work, adoption becomes uneven. Some avoid the tool altogether, while others rely on it too heavily.

This is not simply a matter of training. It is about trust and role clarity. Teachers must remain the central authority in learning, interpretation, and assessment. AI should assist their work, not override their judgment. When teachers feel marginalized, resistance grows. When they feel bypassed, misuse becomes likely.

Assessment presents another serious challenge. Traditional methods of evaluating learning were not designed for a world in which high-quality answers can be generated instantly. If assessment practices remain unchanged, grades risk losing their meaning. Students who rely heavily on AI may appear to perform well, while their actual understanding becomes difficult to measure. At the same time, students who work independently may be unfairly disadvantaged.

This does not mean AI has no place in learning. It means assessment methods must evolve. Greater emphasis must be placed on reasoning, explanation, and the learning process itself, rather than on final outputs alone. Without this shift, confidence in the education system gradually erodes.

Perhaps the most important issue, however, is the need to clearly distinguish between AI as a support tool and AI as a substitute for thinking. Used well, AI can help students understand concepts, explore ideas, and identify gaps in their knowledge. Used poorly, it can remove struggle, decision-making, and reflection from the learning process.

Students do not learn from ready-made answers. They learn from effort, error, and revision. When AI consistently performs these cognitive tasks on their behalf, learning becomes shallow and dependency grows. Over time, this can weaken critical thinking skills and reduce students’ confidence in their own reasoning.

The risks of poorly defined AI use do not appear overnight. They accumulate quietly. Students may become overly reliant on AI support. Peer discussion and collaborative learning may decline. Inequality may widen as students with stronger digital skills benefit more than others.

This is why the current pilot phase is so important. The purpose of a pilot is not to prove that the technology works; for the most part, it already does. The real question is whether the education system is ready to integrate such tools without compromising its core mission.

If Mauritius takes the time to establish strong governance, support teachers, rethink assessment, and clearly define the limits of AI use, MyGPT could become a model of responsible innovation in education. If these foundations are overlooked, the country risks learning these lessons the hard way, after the damage is done.

Artificial intelligence should not make learning easier. It should make learning deeper, fairer, and more meaningful.

That will only happen if we get the foundations right before we scale.