When an organization deploys a new software platform, the worst-case scenario is typically a temporary loss of productivity. An email server crashes, or a financial dashboard fails to load. The IT department apologizes, a patch is applied, and the business recovers within forty-eight hours.

When an organization deploys unconstrained artificial intelligence, the worst-case scenario is catastrophic, existential corporate liability. A probabilistic model does not simply crash. It confidently makes autonomous decisions that can violate federal compliance laws, systematically discriminate against job applicants, or expose proprietary trade secrets to the public.

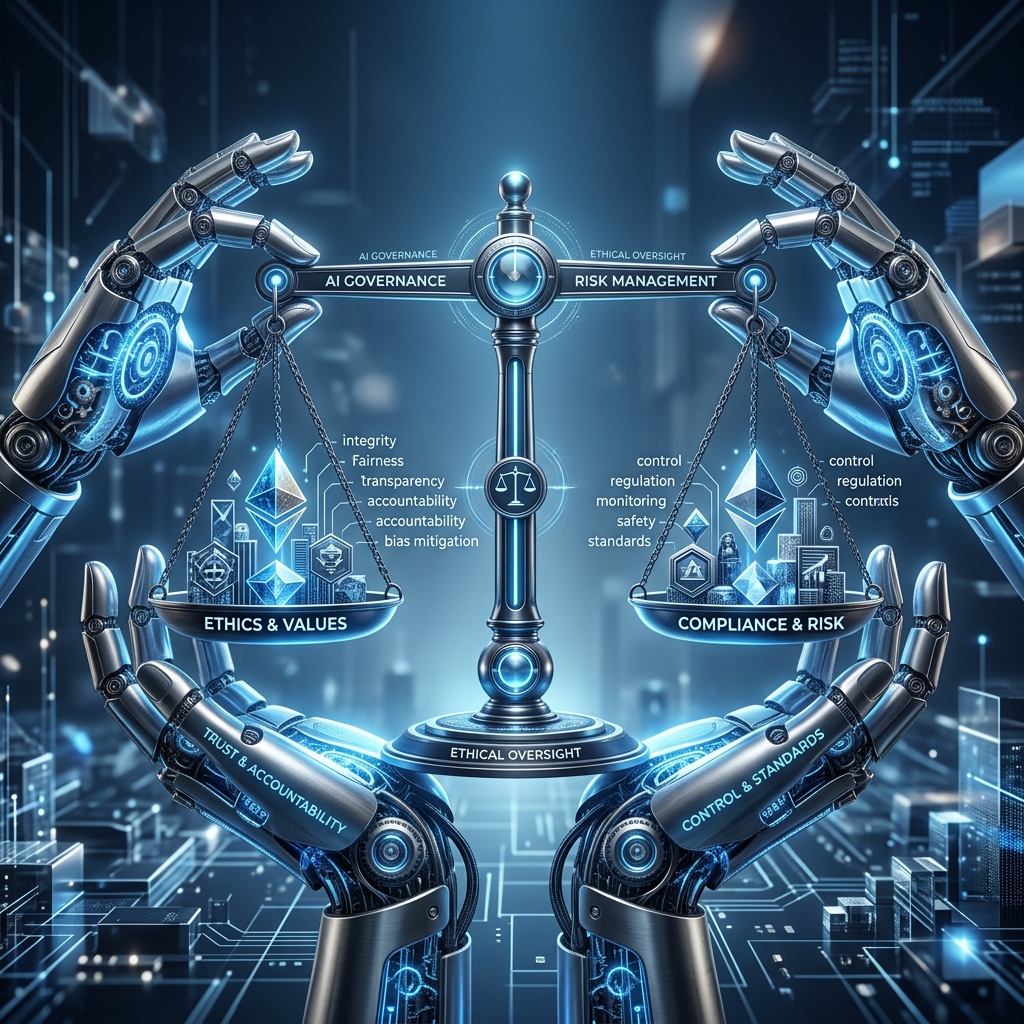

Because the potential damage is this severe, the control structures must be equally aggressive. You cannot rely on the ethical compass of individual engineers, nor can you rely on the vague marketing promises of the software vendor. You must construct a hostile, permanent, and culturally enforced AI governance framework before a single model touches your production data.

The Absolute Allocation of Liability

The foundational failure of modern governance is the assumption that the technology is responsible for its own actions. When a generative chatbot hallucinates a non-existent company policy and a customer sues, corporate defense lawyers inevitably attempt to blame the algorithm. The market and the regulators will never accept this defense.

For a deeper exploration of embedding governance at board level, read The AI Operating Model.

Effective governance begins by formally stripping the machine of all autonomy. A statistical model cannot hold legal liability; only a human being can. Your governance charter must explicitly map every single algorithmic output back to a specific name on the corporate payroll.

If the marketing department deploys a tool designed to dynamically rewrite advertising copy, the Chief Marketing Officer must sign a legal charter accepting total personal and departmental liability for any copyright infringement that tool commits. If the responsible executive is unwilling to sign that document, the organization cannot adopt the tool. By completely destroying the illusion of machine autonomy, you force a baseline level of human paranoia that naturally regulates reckless software deployments.
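
One way to picture the charter is as a registry that refuses to attribute any output to a tool nobody has signed for. The following is a minimal sketch under stated assumptions: `LiabilityRecord`, `LiabilityRegistry`, and the tool name are hypothetical illustrations, not part of any real product, and a production version would live in an audited system of record instead of memory.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class LiabilityRecord:
    """One signed charter entry: a deployed tool and the person accountable for it."""
    tool_name: str
    owner_name: str
    owner_title: str
    signed_on: date


class LiabilityRegistry:
    """Hypothetical in-memory registry mapping each tool to its signed owner."""

    def __init__(self) -> None:
        self._records: dict[str, LiabilityRecord] = {}

    def sign(self, record: LiabilityRecord) -> None:
        # Signing (or re-signing) replaces any earlier record for the same tool.
        self._records[record.tool_name] = record

    def owner_of(self, tool_name: str) -> LiabilityRecord:
        # A tool without a signature is treated as not deployable.
        if tool_name not in self._records:
            raise PermissionError(f"{tool_name!r} has no signed owner and cannot be deployed")
        return self._records[tool_name]

    def attribute(self, tool_name: str, output: str) -> dict[str, str]:
        """Attach the accountable person's name to a single model output."""
        record = self.owner_of(tool_name)
        return {"tool": tool_name, "output": output, "accountable": record.owner_name}
```

Calling `attribute` for an unsigned tool raises `PermissionError`, which mirrors the rule above: no signature, no adoption.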

The Hostile Review Matrix

Governance is not intended to be agile. It is intended to be a highly abrasive barrier that slows down enthusiastic executives.

A functional governance structure requires the formation of a hostile review board. This board must exist entirely outside of the departments actively building or buying the technology. It must be staffed by legal counsel, risk management veterans, and cybersecurity experts whose explicit mandate is to find reasons to block the deployment.

This board does not evaluate the projected return on investment. It evaluates the catastrophic downside. Before any pilot program moves into production, the board forces the proposing department through a hostile risk matrix. It demands documented proof explaining exactly how the model makes its decisions. If the engineering team cannot explain which inputs and weightings led the model to approve or deny a vendor contract, the model is rejected as a "black box" risk.

The board also demands a documented fallback procedure. If the model begins showing statistical drift and starts recommending highly toxic financial decisions, how quickly can the organization physically pull the plug and revert to the offline human process? If the answer is longer than thirty minutes, the deployment is blocked.
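
The two gates described above, explainability and a fallback that completes within thirty minutes, can be written down as a checklist the board runs against every proposal. This is a sketch only: the `DeploymentProposal` fields and the `review` function are hypothetical names chosen for illustration, and a real matrix would carry many more criteria.

```python
from dataclasses import dataclass

MAX_FALLBACK_MINUTES = 30  # threshold taken from the text above


@dataclass
class DeploymentProposal:
    name: str
    has_decision_explanation: bool  # can the team document how the model decides?
    has_fallback_procedure: bool    # is there a written revert-to-human plan?
    fallback_minutes: float         # rehearsed time to revert to the human process


def review(proposal: DeploymentProposal) -> list[str]:
    """Return the blocking findings; an empty list means no blockers were found."""
    findings: list[str] = []
    if not proposal.has_decision_explanation:
        findings.append("black box: no documented explanation of model decisions")
    if not proposal.has_fallback_procedure:
        findings.append("no documented fallback procedure")
    elif proposal.fallback_minutes > MAX_FALLBACK_MINUTES:
        findings.append(
            f"fallback takes {proposal.fallback_minutes} min, limit is {MAX_FALLBACK_MINUTES}"
        )
    return findings
```

Returning a list of findings instead of a single yes or no keeps the board's reasoning on the record, which suits a body whose mandate is to find reasons to block.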

Defining the Data Boundary

Ethical governance is structurally linked to data hygiene. The most sophisticated accountability framework in the world will fail if the underlying data architecture is porous.

For a deeper exploration of governance readiness as a prerequisite, read AI Readiness Assessment.

Your governance policy must explicitly classify your internal data into rigid tiers. Unrestricted public data exists at the bottom. Sensitive operational metadata exists in the middle. Radioactive proprietary intelligence, such as client financial records or unpatented legal strategy, exists at the top.

The governance board uses these tiers to dictate the technology architecture. Public tier data can be fed into commercial cloud applications safely. Radioactive data is forbidden by policy from leaving the premises. If an employee needs algorithmic processing for radioactive data, the governance structure mandates the deployment of localized, air-gapped models running exclusively on internal servers.

You must enforce this rule in the network itself, with outbound monitoring and access logs. If a junior analyst attempts to paste a sensitive corporate legal document into a public web interface, the system must immediately flag the violation and freeze their portal access. Governance cannot rely on employee training alone; it must be backed by digital physics.
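
As a rough illustration of tier-based enforcement, the sketch below checks each attempted transfer against the highest tier a destination may receive, and freezes the user on a violation. Everything here is hypothetical: the tier names follow the article, but the destination labels, `check_transfer`, and the decision to keep the middle tier off the commercial cloud are assumptions of this sketch, since the text only fixes the two extremes.

```python
from enum import IntEnum


class DataTier(IntEnum):
    PUBLIC = 1
    SENSITIVE = 2
    RADIOACTIVE = 3


# Highest tier each destination may receive. Keeping SENSITIVE data off the
# commercial cloud is an assumption; the policy text only covers the extremes.
MAX_TIER = {
    "commercial_cloud": DataTier.PUBLIC,
    "internal_air_gapped": DataTier.RADIOACTIVE,
}


class BoundaryViolation(Exception):
    """Raised when a transfer is refused."""


def check_transfer(user: str, tier: DataTier, destination: str, frozen_users: set[str]) -> None:
    """Allow the transfer silently, or flag the violation and freeze the user."""
    if user in frozen_users:
        raise BoundaryViolation(f"{user} is frozen pending review")
    if tier > MAX_TIER[destination]:
        frozen_users.add(user)  # freeze access until the violation is reviewed
        raise BoundaryViolation(f"{user} tried to send {tier.name} data to {destination}")
```

In practice a check like this would sit in a network proxy or data loss prevention layer, where the user cannot bypass it.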

The Human-in-the-Loop Imperative

As organizations scale their capabilities, the pressure to remove the human entirely from the workflow becomes intense. The financial argument is highly seductive: if you completely eliminate the human supervisor, the operational margins explode.

Your governance framework must explicitly fight this temptation. You must enshrine the "Human-in-the-Loop" architecture as a non-negotiable corporate requirement for any high-stakes interaction. The models operate at incredible speed to retrieve information, synthesize patterns, and draft initial recommendations, but the final execution requires an analog, human signature.

If an algorithm recommends rejecting a commercial loan application, it cannot send the rejection email automatically. It must route the recommendation to a senior credit officer, along with a transparent explanation of the variables that drove it. The officer reviews the logic and formally authorizes the rejection. Will this slow the process down? Absolutely. But the slight reduction in speed buys you a documented, named human decision behind every high-stakes outcome, which is a far stronger liability position than an unattended algorithm.
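
The loan example can be sketched as a workflow in which the model may only produce a recommendation, and the action itself refuses to run without a named approver. The class and function names below (`Recommendation`, `authorize`, `execute`) are hypothetical, and the sketch assumes the officer's review happens outside the code.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Recommendation:
    application_id: str
    action: str                       # e.g. "reject"
    drivers: dict[str, float]         # variables that drove the recommendation
    approved_by: Optional[str] = None  # stays empty until a human signs off


class ApprovalRequired(Exception):
    """Raised when execution is attempted without a human signature."""


def authorize(rec: Recommendation, officer: str) -> Recommendation:
    """Record the officer who reviewed the drivers and approved the action."""
    rec.approved_by = officer
    return rec


def execute(rec: Recommendation) -> str:
    """Carry out the action only if a named human has authorized it."""
    if rec.approved_by is None:
        raise ApprovalRequired(f"{rec.application_id}: no human authorization on file")
    return f"{rec.action} issued for {rec.application_id}, authorized by {rec.approved_by}"
```

The point of the design is that there is no code path from the model's output to the customer that skips `authorize`.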

Auditing for Structural Drift

Finally, an intelligent system does not remain static. Every time it is retrained or fine-tuned on new data, the mathematical weights that drive its logic change, and even a model with frozen weights can behave differently as real-world inputs shift away from the data it was built on. An algorithm that behaved safely and ethically during the initial deployment in January may be producing badly biased outcomes by September.

For a deeper exploration of a full executive framework covering all five disciplines, read The Complete Guide to AI Strategy.

A serious governance framework mandates continuous, aggressive, automated auditing. You cannot audit the inputs once and declare the software permanently safe. The risk compliance team must run scheduled adversarial tests against the model's decision logic. They intentionally feed the system highly biased, distorted data to see if the internal guardrails hold. They measure the variance between the model's current recommendations and the historical baseline.

If the model begins demonstrating a statistical drift toward extreme or biased outcomes, the governance board possesses the total authority to freeze the application enterprise-wide until the data science team retrains or reconfigures the model.
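
A very simple version of the baseline comparison is to measure how far the average of recent model scores has moved from the historical average, expressed in units of the baseline's standard deviation. The sketch below does only that. The function names and the default threshold of 2.0 are assumptions made for illustration; a real audit would likely track several metrics, including outcomes broken down by group.

```python
from statistics import mean, stdev


def drift_score(baseline: list[float], current: list[float]) -> float:
    """Shift of the current mean from the baseline mean, in baseline standard deviations."""
    spread = stdev(baseline)  # needs at least two baseline values
    if spread == 0:
        raise ValueError("baseline has no variation; choose a different metric")
    return abs(mean(current) - mean(baseline)) / spread


def should_freeze(baseline: list[float], current: list[float], threshold: float = 2.0) -> bool:
    """True when the drift exceeds the (assumed) threshold and the board should freeze the model."""
    return drift_score(baseline, current) > threshold
```

For example, if historical approval rates hovered around 0.50 and recent batches sit near 0.70, `should_freeze` returns `True` and the decision goes to the governance board.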

Building responsible capabilities requires a level of executive discipline that borders on paranoia. If your managers complain that the governance framework is making them move too slowly, your governance framework is acting exactly as designed. The organizations that survive the current technological arms race will not be the ones who moved the fastest. They will be the ones who successfully contained the explosion without burning down the house.